What’s on the Wire: Choosing the Best Approach to Network Monitoring

Network monitoring is the foundation of a robust, secure, and efficient IT infrastructure. Without a clear view of network traffic, performance metrics, and emerging faults, organizations are flying blind and risk downtime, security breaches, and a degraded user experience. The volume and complexity of modern networks call for deliberate monitoring strategies, and the approach chosen largely determines how early problems can be identified and resolved. This article covers the core principles of network monitoring, the most common methodologies, and the factors to weigh when selecting a solution.

At its heart, network monitoring aims to provide visibility into network activity. This visibility covers three key areas: performance, availability, and security. Performance monitoring focuses on how well the network is functioning and tracks metrics such as bandwidth utilization, latency, packet loss, and jitter. High latency between critical servers, for instance, can cripple application performance even if the network is technically "up." Availability monitoring, on the other hand, is concerned with whether network devices and services are accessible and operational. This involves checking whether routers, switches, firewalls, servers, and applications are responding to requests. Downtime, even for a short period, can translate into significant financial losses and reputational damage. Security monitoring involves detecting suspicious traffic patterns, unauthorized access attempts, and potential malware infections that could compromise sensitive data and disrupt operations.
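To make the performance metrics above concrete, here is a minimal sketch of how latency, packet loss, and jitter might be derived from a series of probe results. The function name `summarize_probes` and the input format (round-trip times in milliseconds, with `None` for an unanswered probe) are assumptions made for illustration. Jitter is computed here as the mean absolute difference between consecutive replies, which is one simple definition among several.

```python
from statistics import mean
from typing import Optional, Sequence


def summarize_probes(rtts_ms: Sequence[Optional[float]]) -> dict:
    """Summarize round-trip times in ms; None marks a probe with no reply."""
    sent = len(rtts_ms)
    replies = [r for r in rtts_ms if r is not None]

    # Packet loss: share of probes that never received a reply.
    loss_pct = 100.0 * (sent - len(replies)) / sent if sent else 0.0

    # Latency: average round-trip time of the probes that were answered.
    avg_latency_ms = mean(replies) if replies else None

    # Jitter (simplified): mean absolute change between consecutive replies.
    deltas = [abs(b - a) for a, b in zip(replies, replies[1:])]
    jitter_ms = mean(deltas) if deltas else 0.0

    return {
        "sent": sent,
        "loss_pct": loss_pct,
        "avg_latency_ms": avg_latency_ms,
        "jitter_ms": jitter_ms,
    }


if __name__ == "__main__":
    # Four probes, one of which was lost.
    print(summarize_probes([10.0, 12.0, None, 11.0]))
```

In practice the samples would come from ICMP or application-level probes. The sketch only covers the arithmetic applied once those samples exist.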

Several foundational protocols and technologies underpin most network monitoring solutions. Simple Network Management Protocol (SNMP) remains a ubiquitous standard for collecting information from network devices. SNMP-enabled devices expose objects defined in management information bases (MIBs), which cover a wealth of data, from device status and hardware inventory to traffic statistics and error rates. Monitoring tools poll these objects at regular intervals to gather this information. NetFlow, sFlow, and IPFIX are other critical technologies that provide flow-level traffic analysis. NetFlow and IPFIX export records describing network conversations, while sFlow exports sampled packet headers along with interface counters. In each case, the collector sees more than simple packet or byte totals: it receives metadata such as source and destination IP addresses, ports, protocols, and the amount of data transferred. This granular visibility into traffic patterns is invaluable for understanding application usage, identifying bandwidth hogs, and detecting anomalous behavior.
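The two ideas in the preceding paragraph, polling interface counters and summarizing flow metadata, can be sketched as follows. This is illustrative only: it assumes the counter values have already been retrieved (for example from IF-MIB objects such as `ifInOctets` or the 64-bit `ifHCInOctets`) and that flow records have already been decoded by a collector. The `FlowRecord` class and both function names are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Iterable, List, Tuple


def utilization_pct(prev_octets: int, curr_octets: int, interval_s: float,
                    link_bps: float, counter_bits: int = 64) -> float:
    """Link utilization between two polls of an octet counter.

    The modulo handles a single counter wrap, which is common with
    32-bit counters on fast links.
    """
    if interval_s <= 0 or link_bps <= 0:
        raise ValueError("interval_s and link_bps must be positive")
    delta_octets = (curr_octets - prev_octets) % (1 << counter_bits)
    return 100.0 * delta_octets * 8 / (interval_s * link_bps)


@dataclass(frozen=True)
class FlowRecord:
    """Hypothetical, already-decoded flow record (not a wire format)."""
    src_ip: str
    dst_ip: str
    dst_port: int
    protocol: str
    byte_count: int


def top_talkers(flows: Iterable[FlowRecord], n: int = 3) -> List[Tuple[str, int]]:
    """Return the n source addresses that sent the most bytes."""
    totals: Counter = Counter()
    for flow in flows:
        totals[flow.src_ip] += flow.byte_count
    return totals.most_common(n)


if __name__ == "__main__":
    # 37.5 MB transferred in 300 s on a 10 Mbit/s link.
    print(utilization_pct(1_000_000, 38_500_000, 300, 10_000_000))
    flows = [
        FlowRecord("10.0.0.5", "192.0.2.10", 443, "tcp", 7_000),
        FlowRecord("10.0.0.9", "192.0.2.10", 443, "tcp", 2_000),
        FlowRecord("10.0.0.5", "198.51.100.4", 53, "udp", 500),
    ]
    print(top_talkers(flows, n=2))
```

Working from counter deltas rather than absolute values is the important design point: the counters only ever increase (until they wrap), so utilization has to be computed between two polls.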

Beyond these foundational elements, network monitoring approaches can be broadly categorized into several key types, each with its own strengths and applications. Agent-based monitoring involves installing software agents on individual servers, workstations, or network devices. These agents collect detailed performance metrics, log files, and process information directly from the host system and transmit it to a central monitoring server. Agent-based solutions offer deep insights into the health and performance of individual endpoints and applications running on them, providing a microscopic view of system behavior. They are particularly useful for monitoring complex applications, server resource utilization (CPU, memory, disk I/O), and the status of services. However, agent deployment and management can be resource-intensive and require administrative privileges on each monitored device, posing challenges in highly dynamic or distributed environments.
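As a rough illustration of what an agent does on the host, the sketch below gathers a few local metrics using only the Python standard library and serializes them for shipment to a central server. The function names and the payload fields are assumptions made for this example. A real agent would also handle scheduling, authentication, and transport, all of which are omitted here.

```python
import json
import os
import shutil
import socket
import time


def collect_host_metrics(disk_path: str = os.path.abspath(os.sep)) -> dict:
    """Collect a small snapshot of host health from the local system."""
    total, used, _free = shutil.disk_usage(disk_path)
    try:
        # 1-minute load average; not available on every platform.
        load_1m = os.getloadavg()[0]
    except (AttributeError, OSError):
        load_1m = None
    return {
        "host": socket.gethostname(),
        "timestamp": time.time(),
        "cpu_count": os.cpu_count(),
        "load_1m": load_1m,
        "disk_used_pct": round(100.0 * used / total, 1) if total else None,
    }


def encode_report(metrics: dict) -> bytes:
    """Serialize the snapshot as JSON, ready to send to a collector."""
    return json.dumps(metrics, sort_keys=True).encode("utf-8")


if __name__ == "__main__":
    print(encode_report(collect_host_metrics()).decode("utf-8"))
```

Because the code runs on the monitored host itself, it can read values that are awkward or impossible to obtain remotely. That access is the main appeal of the agent-based model and also the source of its deployment overhead.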

Agentless monitoring, conversely, relies on protocols like SNMP, WMI (Windows Management Instrumentation), SSH, and HTTP to gather data from network devices and servers without requiring any software installation on the target. This approach is less intrusive and easier to deploy, making it ideal for managing a large number of devices or for environments where installing agents is impractical or undesirable. Agentless tools can monitor device uptime, basic performance metrics like CPU and memory utilization on servers, and the operational status of network infrastructure components. While simpler to implement, agentless monitoring may offer a less detailed view of application-specific performance compared to agent-based methods.
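One of the simplest agentless checks is testing whether a service accepts TCP connections, which needs nothing installed on the target. The sketch below is a minimal example under that assumption. The function name `check_tcp` is hypothetical, and a successful connection only shows that the port is open, not that the application behind it is healthy.

```python
import socket
import time
from typing import Optional, Tuple


def check_tcp(host: str, port: int,
              timeout_s: float = 2.0) -> Tuple[bool, Optional[float]]:
    """Return (reachable, connect_time_ms) for a TCP service."""
    start = time.perf_counter()
    try:
        # create_connection resolves the host and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout_s):
            elapsed_ms = (time.perf_counter() - start) * 1000.0
            return True, elapsed_ms
    except OSError:
        # Covers refused connections, timeouts, and name-resolution failures.
        return False, None


if __name__ == "__main__":
    # 127.0.0.1:22 is only an example target; substitute a real device.
    print(check_tcp("127.0.0.1", 22, timeout_s=1.0))
```

A monitoring system would run checks like this on a schedule across its device inventory and alert when a target stops responding.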

Synthetic transaction monitoring, also known as proactive monitoring, simulates user interactions with applications and services to measure their performance and availability from an end-user perspective. Scripts are created to mimic typical user actions, such as logging into a web application, performing a search, or completing a transaction. These scripts are executed from various geographical locations and at predefined intervals, reporting on response times, transaction success rates, and potential errors. Synthetic monitoring is crucial for ensuring that critical business applications are functioning as expected for actual users, even before they report an issue. It helps identify performance bottlenecks that might not be apparent from traditional network infrastructure monitoring alone.
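The scripted-transaction idea can be sketched as a small runner that executes named steps in order, times each one, and stops at the first failure. Everything here is hypothetical: `run_transaction` is an illustrative name, and the steps are plain callables standing in for real actions such as HTTP requests against a login or search page.

```python
import time
from typing import Callable, List, Sequence, Tuple

# A step is a (name, action) pair; the action raises an exception on failure.
Step = Tuple[str, Callable[[], None]]


def run_transaction(steps: Sequence[Step]) -> dict:
    """Run steps in order, timing each; stop at the first failing step."""
    results: List[dict] = []
    ok = True
    for name, action in steps:
        start = time.perf_counter()
        error = None
        try:
            action()
        except Exception as exc:  # a failed step fails the whole transaction
            error = f"{type(exc).__name__}: {exc}"
        elapsed_ms = (time.perf_counter() - start) * 1000.0
        results.append(
            {"step": name, "ok": error is None,
             "elapsed_ms": elapsed_ms, "error": error}
        )
        if error is not None:
            ok = False
            break
    total_ms = sum(r["elapsed_ms"] for r in results)
    return {"ok": ok, "steps": results, "total_ms": total_ms}


if __name__ == "__main__":
    def login() -> None:
        time.sleep(0.01)  # placeholder for a real request

    def search() -> None:
        raise RuntimeError("search returned HTTP 500")  # simulated failure

    report = run_transaction([("login", login), ("search", search)])
    print(report["ok"], [s["step"] for s in report["steps"]])
```

Running the same script from several locations at fixed intervals, and comparing the per-step timings, is what turns this into the monitoring described above.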

Real User Monitoring (RUM) captures and analyzes the actual behavior of end users as they interact with applications. By deploying a small JavaScript snippet on web pages or integrating SDKs into mobile applications, RUM tools collect data on page load times, user navigation paths, JavaScript errors, and application performance from the user’s browser or device. RUM provides invaluable insight into the actual user experience, identifying areas of frustration or underperformance that synthetic monitoring might miss. It helps pinpoint issues specific to certain browsers, devices, or network conditions experienced by real users. Combining synthetic monitoring with RUM gives a comprehensive view of application performance: synthetic checks catch problems proactively, while RUM shows what users actually experienced.
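On the analysis side, RUM data is often summarized with percentiles per browser or device rather than simple averages, so that a slow minority of users is not hidden. The sketch below assumes beacons have already been collected as `(browser, page_load_ms)` pairs. That format, the function names, and the choice of the 75th percentile are all assumptions made for illustration.

```python
import math
from collections import defaultdict
from typing import Dict, Iterable, List, Sequence, Tuple


def percentile(values: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest value covering pct% of samples."""
    if not values:
        raise ValueError("values must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def p75_by_browser(beacons: Iterable[Tuple[str, float]]) -> Dict[str, float]:
    """Group page-load times by browser and report the 75th percentile."""
    grouped: Dict[str, List[float]] = defaultdict(list)
    for browser, load_ms in beacons:
        grouped[browser].append(load_ms)
    return {browser: percentile(times, 75) for browser, times in grouped.items()}


if __name__ == "__main__":
    sample = [
        ("firefox", 100.0), ("firefox", 200.0),
        ("firefox", 300.0), ("firefox", 400.0),
        ("safari", 900.0),
    ]
    print(p75_by_browser(sample))
```

Breaking the numbers down by browser, device, or region is what allows the browser-specific and network-specific issues mentioned above to surface.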

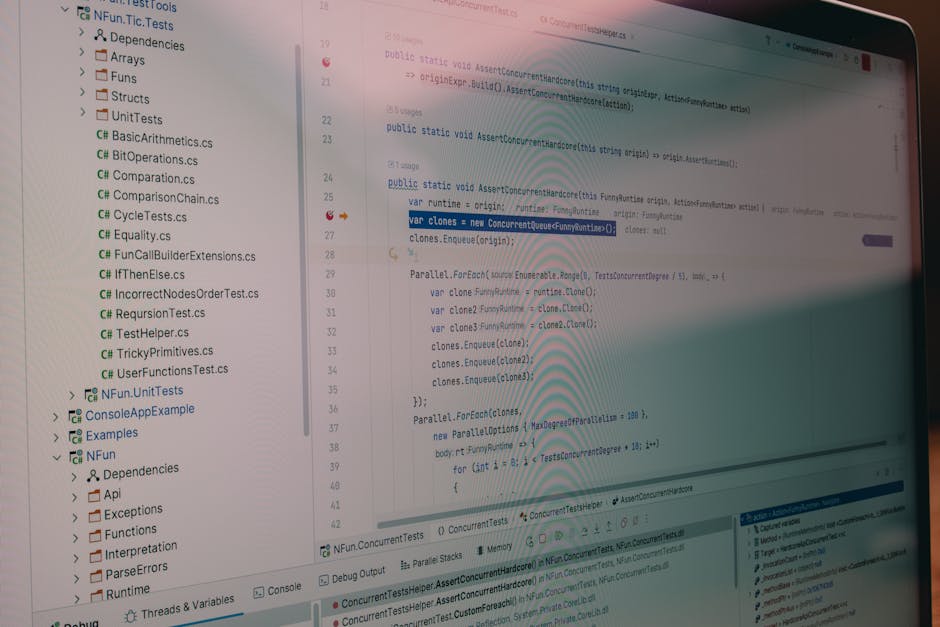

Packet capture and analysis involves intercepting and examining the raw data packets traversing the network. It is closely related to deep packet inspection (DPI), though the two are not identical: DPI usually refers to devices that examine packet contents as traffic flows through them, whereas packet capture records traffic for examination. Packet capture provides the most granular level of visibility, allowing for the analysis of protocol behavior, payload content (while respecting privacy concerns), and the identification of subtle network anomalies that might evade other monitoring methods. Packet analysis is essential for deep-dive troubleshooting of complex network issues, security incident investigations, and performance optimization. Tools like Wireshark are indispensable for this purpose, enabling network engineers to dissect network traffic at the packet level. However, continuously capturing and analyzing all traffic across an entire network is extremely data-intensive and resource-heavy, so captures are usually targeted at specific links, hosts, or time windows.
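To give a sense of what dissecting traffic "at the packet level" means, the following sketch decodes the fixed 20-byte portion of an IPv4 header from raw bytes. It assumes the bytes start at the IP header (any link-layer header already stripped) and ignores IP options and everything above the network layer. The `IPv4Header` class and `parse_ipv4_header` function are illustrative names. Tools such as Wireshark perform this kind of decoding for hundreds of protocols.

```python
import socket
import struct
from dataclasses import dataclass

# Fixed part of the IPv4 header in network byte order (20 bytes):
# version/IHL, TOS, total length, identification, flags/fragment offset,
# TTL, protocol, header checksum, source address, destination address.
_IPV4_FIXED = struct.Struct("!BBHHHBBH4s4s")


@dataclass(frozen=True)
class IPv4Header:
    version: int
    header_len: int  # in bytes
    total_len: int   # header plus payload, in bytes
    ttl: int
    protocol: int    # e.g. 6 = TCP, 17 = UDP
    src: str
    dst: str


def parse_ipv4_header(packet: bytes) -> IPv4Header:
    """Decode the fixed IPv4 header fields from the start of packet."""
    if len(packet) < _IPV4_FIXED.size:
        raise ValueError("need at least 20 bytes for an IPv4 header")
    (ver_ihl, _tos, total_len, _ident, _flags_frag,
     ttl, protocol, _checksum, src, dst) = _IPV4_FIXED.unpack_from(packet)
    version = ver_ihl >> 4
    if version != 4:
        raise ValueError(f"not an IPv4 packet (version={version})")
    return IPv4Header(
        version=version,
        header_len=(ver_ihl & 0x0F) * 4,  # IHL counts 32-bit words
        total_len=total_len,
        ttl=ttl,
        protocol=protocol,
        src=socket.inet_ntoa(src),
        dst=socket.inet_ntoa(dst),
    )


if __name__ == "__main__":
    # Hand-built sample header using documentation address ranges.
    sample = _IPV4_FIXED.pack(
        0x45, 0, 40, 1, 0, 64, 6, 0,
        socket.inet_aton("192.0.2.1"), socket.inet_aton("198.51.100.7"),
    )
    print(parse_ipv4_header(sample))
```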

The choice of the best network monitoring approach is not a one-size-fits-all decision. It hinges on several critical factors tailored to an organization’s specific needs and circumstances.

Firstly, Scope and Scale are paramount. The size and complexity of the network are primary drivers. A small business with a few servers and workstations will have very different monitoring requirements than a multinational corporation with thousands of devices across multiple data centers and cloud environments. Larger, more distributed networks often necessitate a layered approach combining agentless monitoring for infrastructure and agent-based monitoring for critical servers and applications, potentially augmented by cloud-native monitoring solutions.

Secondly, Criticality of Services and Applications dictates the level of detail and proactivity required. Applications that are core to business operations, such as e-commerce platforms, financial trading systems, or critical patient care systems, demand stringent monitoring with fast detection and rapid response capabilities. This often translates to a heavier reliance on synthetic transaction monitoring and RUM to verify that users are getting the experience they expect. Less critical applications might be adequately served by basic availability and performance checks.

Thirdly, Budget and Resources play a significant role. Comprehensive monitoring solutions can represent a substantial investment in terms of software licensing, hardware infrastructure, and skilled personnel. Organizations must balance their monitoring needs against their financial and human capital constraints. Open-source tools can be a cost-effective starting point for smaller organizations, but they often require more in-house expertise for setup, configuration, and ongoing maintenance. Commercial solutions typically offer more robust features, dedicated support, and a more streamlined user experience, but at a higher price point.

Fourthly, Security Requirements are increasingly driving monitoring strategies. The rise of sophisticated cyber threats necessitates robust security monitoring capabilities, including intrusion detection and prevention systems (IDPS), security information and event management (SIEM) solutions, and advanced threat analytics. Network monitoring tools that can integrate with or include these security functionalities are becoming indispensable. Visibility into traffic patterns, the ability to detect anomalies, and rapid alerting for suspicious activities are critical components of a comprehensive security posture.

Fifthly, Integration Capabilities are crucial for a holistic view. Modern IT environments are complex, often comprising on-premises infrastructure, multiple cloud providers, and a variety of software-as-a-service (SaaS) applications. The chosen monitoring solution should be capable of integrating with existing tools, such as ITSM platforms, ticketing systems, and configuration management databases (CMDBs). Seamless integration allows for automated workflows, streamlined incident management, and a unified dashboard for a comprehensive overview of the IT landscape. API-driven integration is key here, enabling data sharing and automation between different systems.

Sixthly, Ease of Deployment and Management should not be overlooked. A monitoring solution, no matter how powerful, will be ineffective if it is too complex to set up, configure, or maintain. The learning curve for the IT team, the effort required for ongoing tuning and maintenance, and the availability of user-friendly interfaces are all important considerations. Solutions that offer intuitive dashboards, automated discovery of network devices, and clear alerting mechanisms can significantly reduce operational overhead.

Seventhly, Reporting and Analytics capabilities are essential for demonstrating value, identifying trends, and informing strategic decisions. The ability to generate customizable reports on network performance, availability, capacity, and security is crucial. Advanced analytics, including predictive modeling and root cause analysis, can further empower IT teams to proactively address potential issues before they impact users. Visualizations, such as network maps, performance graphs, and heatmaps, are vital for conveying complex data in an easily understandable format.

Finally, Scalability and Future-Proofing are critical for long-term effectiveness. The IT landscape is constantly evolving. Network monitoring solutions should be able to scale with the organization’s growth and adapt to new technologies and architectures, such as the increasing adoption of IoT devices, edge computing, and containerized environments. Choosing a solution that is flexible and extensible will prevent the need for costly rip-and-replace cycles in the future. Cloud-native monitoring solutions, for instance, often offer inherent scalability and adaptability.

In conclusion, the "what’s on the wire" question is fundamental to effective IT operations. Choosing the best approach to network monitoring requires a thorough understanding of the organization’s unique environment, critical services, security posture, and resource constraints. A multi-faceted approach that combines several methodologies (agent-based and agentless monitoring, synthetic and real user monitoring, and, where needed, packet analysis) often provides the most comprehensive and effective solution. By weighing the factors outlined above and prioritizing deep visibility, proactive alerting, actionable insights, and seamless integration, organizations can significantly improve their network’s reliability, security, and overall performance. Because network technologies keep evolving, the monitoring strategy must be revisited and adapted over time so that IT teams stay ahead of potential disruptions.